There are many reasons why, sometimes, you might notice a drop in your organic traffic.

Many studies show that SEO is one of the most powerful web marketing strategies. Although SEO has many benefits, some factors can jeopardize it and make your strategy fail, even after it has worked perfectly well for years. Here are the 9 main reasons why your SEO strategy isn’t getting you the expected results and why your website is showing a drop in organic traffic.

How to identify a drop in organic Google search traffic

Anyone who implements a strategy will eventually need KPIs and tools to track its performance, identify changes and be able to take corrective action as quickly as possible. This is why we advise you to use these two powerful Google tools and to combine them with others to get a full overview of your website’s performance.

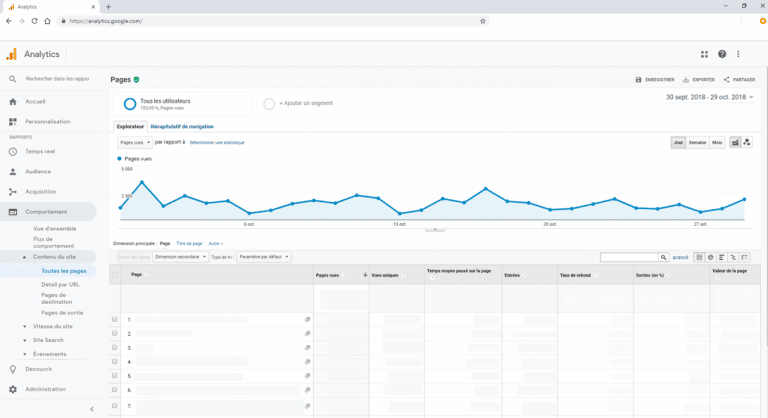

Google Analytics for an overview of your traffic and conversions

Your traffic and sales are great indicators of the quality of your SEO strategy. Google Analytics allows you to track the leads coming from organic traffic, as well as the resulting turnover. By identifying the pages that rank the best and drive the most traffic to your website you can adapt your marketing strategy accordingly. The ideal is to design your own dashboards based on your objectives and to segment your audience to identify which target you should work on to increase your results.

From the launch of your website or during a redesign, it is important to link it to your Google Analytics account to start collecting data as soon as possible.

If you notice a significant decrease in traffic, you first have to determine whether the drop mainly affects your SEO traffic, only some webpages, or your overall traffic. The solution to implement will depend on the situation.
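To make this first diagnosis concrete, here is a minimal sketch in Python. It assumes you have exported session counts per channel for two comparable periods; the function name `drop_by_channel`, the 20% threshold and the sample figures are all hypothetical and only serve as an illustration.

```python
def drop_by_channel(before, after, threshold=0.2):
    """Return the channels whose sessions fell by at least `threshold` (a fraction)."""
    dropped = {}
    for channel, previous in before.items():
        current = after.get(channel, 0)
        if previous > 0:
            change = (current - previous) / previous
            if change <= -threshold:
                dropped[channel] = round(change, 3)
    return dropped


# Hypothetical session counts per channel for two comparable weeks.
before = {"organic": 1000, "direct": 400, "referral": 200}
after = {"organic": 600, "direct": 390, "referral": 205}

print(drop_by_channel(before, after))  # {'organic': -0.4}
```

If only the organic channel shows up, the problem is most likely related to SEO. If every channel drops at once, look for a broader cause such as tracking errors or downtime.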

However, be careful: Google Analytics data is less precise than Search Console data. There are several reasons for this inaccuracy: server errors, visitors whose browsers did not load the JavaScript tracking code, etc. Therefore, it’s best to combine the two tools for a global view of your website’s performance.

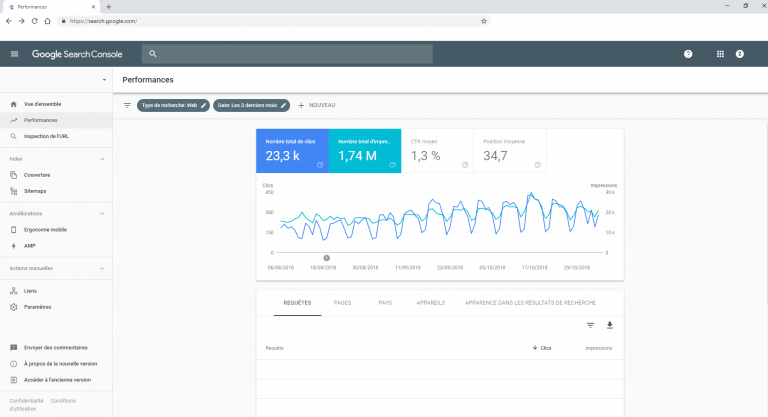

Google Search Console for monitoring the evolution of your KPIs

Essential for any SEO expert, the Google Search Console (formerly Google Webmaster Tools) allows you to keep an eye on the evolution of your different KPIs and to take action on your website in just a few clicks. It is also the only tool to give you precise click-through rates for organic traffic.

With it, you can find out precisely:

- the keywords your website ranks for and those generating the most traffic;

- how your rankings evolve for your strategic keywords;

- the CTR (click-through rate) of your different links. If the CTR is low, you may need to optimize your title tags and meta descriptions;

- the backlinks pointing to your website, which lets you assess the quality of your netlinking strategy.
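As an illustration of the CTR point above, here is a small Python sketch that computes CTR as clicks divided by impressions and lists the pages that fall under a floor. The row format, the function name `low_ctr_pages` and both thresholds are assumptions made for this example; adapt them to whatever export you actually work with.

```python
def low_ctr_pages(rows, min_impressions=100, ctr_floor=0.02):
    """Return (page, ctr) pairs whose CTR is below `ctr_floor`.

    Pages with fewer than `min_impressions` are skipped because their
    CTR is too noisy to be meaningful.
    """
    flagged = []
    for row in rows:
        if row["impressions"] < min_impressions:
            continue
        ctr = row["clicks"] / row["impressions"]
        if ctr < ctr_floor:
            flagged.append((row["page"], ctr))
    return flagged


# Hypothetical performance rows for three pages.
rows = [
    {"page": "/a", "clicks": 5, "impressions": 1000},
    {"page": "/b", "clicks": 50, "impressions": 1000},
    {"page": "/c", "clicks": 0, "impressions": 10},
]

for page, ctr in low_ctr_pages(rows):
    print(f"{page}: CTR {ctr:.1%}")  # /a: CTR 0.5%
```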

With this tool, you can quickly see whether your website has errors in its sitemap.xml or robots.txt files, or whether it has been hit by a Google penalty or even hacked.

Ranking monitoring tools

If you track your rankings daily, you will quickly identify a decrease in traffic and fix the situation immediately. To do so, we advise you to list the top 20 strategic key phrases that drive the most traffic. If you notice significant changes two days in a row, this might mean that something is not working in your strategy!
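The "two days in a row" rule can be sketched in a few lines of Python. This is only an illustration: the data layout (one list of daily positions per key phrase), the function name `keywords_to_review` and the 3-position threshold are assumptions, not the behavior of any particular tracking tool.

```python
def keywords_to_review(history, min_drop=3):
    """Flag key phrases that lost at least `min_drop` positions two days in a row.

    `history` maps each key phrase to its daily positions, oldest first
    (position 1 is the best ranking).
    """
    flagged = []
    for keyword, positions in history.items():
        if len(positions) < 3:
            continue  # not enough data to compare two days against a baseline
        baseline, day1, day2 = positions[-3], positions[-2], positions[-1]
        # A larger position number means a worse ranking.
        if day1 - baseline >= min_drop and day2 - baseline >= min_drop:
            flagged.append(keyword)
    return flagged


# Hypothetical daily positions for three key phrases.
history = {
    "ski rental": [2, 2, 6, 7],
    "ski gear hire": [4, 5, 4, 5],
    "snowboard rental": [3, 3, 8, 3],
}

print(keywords_to_review(history))  # ['ski rental']
```

A one-day spike such as the one for "snowboard rental" is ignored, which avoids reacting to normal daily fluctuations.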

Among the different tools available to you, you can use Ahrefs, SEMrush, Google Search Console or even tracking tools specifically designed for your needs, either by your SEO agency or internally by your R&D department.

At Semji, we created our own tool with all the indicators that need to be monitored to provide you with comprehensive dashboards for a live tracking of your website.

What are the reasons for a drop in SEO traffic?

Google algorithms have recently been updated

A sudden drop in traffic might also mean a change in algorithms. Google sometimes announces in advance that its algorithms will be updated, but only quite vaguely: no one knows the exact content of an update beforehand, and it is usually discovered only when the first significant sanctions hit websites. The possible consequences, however, are well known: a rise in rankings, a drop on the SERP, or even de-indexing. Some websites might also not see any change.

It’s obvious that your website can’t evolve with each change of algorithm, and the reason is simple: Google makes changes to its algorithms more than 1,500 times a year! It’s not always easy to make the necessary changes before an algorithm cracks down.

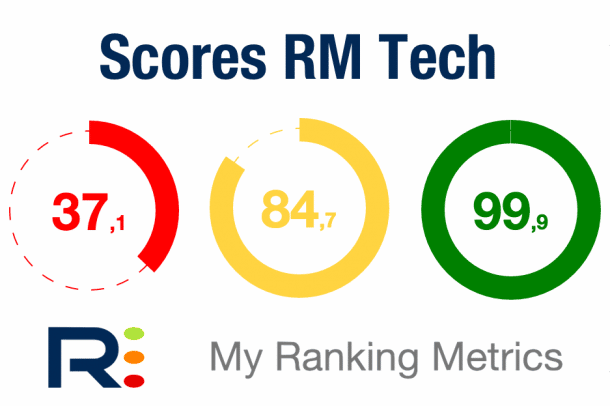

In order not to fear algorithm updates, the best advice is to build ALL of your website FOR online users! An RM Tech audit lets you check the quality of your website and its content and improve it along the way.

Your website is inaccessible

A website that is inaccessible to Google, to users, or to both will damage your SEO.

Did you plan midnight maintenance for your website, thinking that no one would visit it at that time? Think again. Your users may not be up browsing your website, but Googlebot never sleeps! And if it repeatedly can’t crawl your website when it decides to, your rankings can suffer.

From the user’s side, a website that is inaccessible because of a server breakdown or a failed update is also extremely damaging. The type of error displayed when you try to reach your website helps you identify the origin of the problem: a problem with files or directories, a syntax error in the .htaccess file, an overloaded server, etc. In any case, if the problem comes from your hosting company and happens often, you might want to consider switching to a competitor!

In addition, your website might be so slow to load that your visitors get discouraged and quickly go to one of your competitors’ websites to buy instead. Studies show that after 3 seconds of loading time, 50% of visitors leave a website. Website performance can impact your SEO positively or negatively. So, be careful!

Your website has a crawling or indexing problem

Another important thing to check is whether your website appears properly in search results. If that isn’t the case, take a look at the files that control crawling and tell robots how to proceed and which pages and content should be preferred or ignored: robots.txt and sitemap.xml. Don’t forget to take a look at your server logs, which might reveal some precious information!

As far as the robots.txt file is concerned, a missing or empty file does not block anything: crawlers treat it as permission to crawl the whole website. The real danger is wrong instructions: a single misplaced Disallow rule can keep Googlebot away from entire sections of your website and deprive you of a huge amount of traffic!
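To see how much damage a wrong instruction can do, you can test a robots.txt file against a few important URLs before publishing it. The sketch below uses `urllib.robotparser` from Python’s standard library. Keep in mind that this parser is a simplified one and may not match Googlebot’s behavior in every edge case (wildcards, for example); the helper name `is_crawlable` and the example.com URLs are placeholders.

```python
from urllib.robotparser import RobotFileParser


def is_crawlable(robots_txt, url, user_agent="Googlebot"):
    """Return True if `robots_txt` (the file content) lets `user_agent` fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# A rule that blocks the whole website, usually left over by mistake.
blocks_everything = "User-agent: *\nDisallow: /\n"
# A rule that only blocks a private section.
blocks_admin_only = "User-agent: *\nDisallow: /admin/\n"

url = "https://example.com/products/"
print(is_crawlable(blocks_everything, url))  # False
print(is_crawlable(blocks_admin_only, url))  # True
```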

If this first step has been properly set up, the problem might come from the sitemap.xml file, which may contain errors. If some of your website’s URLs are not indexed, compare the number of URLs listed in the sitemap.xml file with the number of URLs actually indexed.
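Here is one possible way to run that comparison in Python, using only the standard library. It assumes you already have the sitemap content as a string and a list of indexed URLs exported from your tools; the function names and sample URLs are hypothetical.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(sitemap_xml):
    """Extract the set of <loc> URLs from a sitemap.xml string."""
    root = ET.fromstring(sitemap_xml)
    return {loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)}


def missing_from_index(sitemap_xml, indexed_urls):
    """Return the sitemap URLs that are absent from `indexed_urls`, sorted."""
    return sorted(sitemap_urls(sitemap_xml) - set(indexed_urls))


# Hypothetical sitemap and list of indexed URLs.
sitemap = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/skis</loc></url>
  <url><loc>https://example.com/boots</loc></url>
</urlset>"""
indexed = ["https://example.com/", "https://example.com/skis"]

print(len(sitemap_urls(sitemap)), "URLs in sitemap,", len(indexed), "indexed")
print(missing_from_index(sitemap, indexed))  # ['https://example.com/boots']
```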

Perhaps only the mobile version of your website is affected, or maybe mobile-first indexing is what is negatively impacting you. Once again, the Search Console will help you pinpoint the problem.

If crawling is blocked, your webpages cannot be indexed and visitors therefore have no chance of finding your website through search.

If your website is undergoing a redesign, poorly done 301 redirects can have a negative impact on your traffic. Any visitor trying to access your website through an old URL that has no redirect will land on a 404 error page. If you have backlinks but haven’t redirected the old URLs or sent the new ones to the websites from which the backlinks originate, all the potential traffic from these websites will be lost.
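Before going live, it can help to audit your redirect plan offline. The Python sketch below assumes you have a list of old URLs and a mapping from old to new URLs; `audit_redirects` and the sample paths are hypothetical. It reports old URLs with no redirect (future 404 errors) and redirects that point to another redirected URL (chains).

```python
def audit_redirects(old_urls, redirects):
    """Check a planned 301 redirect map.

    `redirects` maps each old URL to its new target.
    Returns (missing, chains):
      - missing: old URLs that have no redirect and would end in a 404;
      - chains: old URLs whose target is itself redirected.
    """
    missing = [url for url in old_urls if url not in redirects]
    chains = [
        url for url in old_urls
        if url in redirects and redirects[url] in redirects
    ]
    return missing, chains


# Hypothetical URLs from before the redesign and the planned redirects.
old_urls = ["/old-a", "/old-b", "/old-c"]
redirects = {"/old-a": "/new-a", "/old-b": "/old-a"}

missing, chains = audit_redirects(old_urls, redirects)
print("No redirect:", missing)     # No redirect: ['/old-c']
print("Redirect chains:", chains)  # Redirect chains: ['/old-b']
```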

You’ve been hit by a Google penalty

A website that has been hit by a Google penalty is going to see a significant drop in organic traffic. Here are two specific cases:

- either the website is going to lose SERP positions and if the most strategic pages are affected, then this will have a serious impact,

- or it quite simply won’t appear in the search engine anymore.

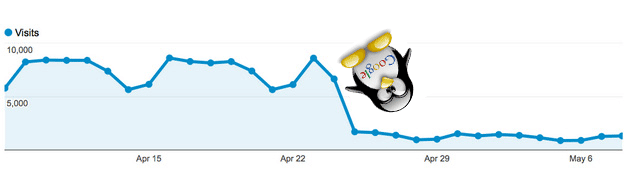

Most Google penalties affecting a website are caused by the Panda, Penguin and Fred algorithms, but they can also come from a manual action by a real human!

If your website is subject to a Panda penalty, your content is considered to be of poor quality (duplicate content, price comparison tool, link farms).

If you’ve been hit by Penguin, it means that you have been over-optimizing. This may be due to poor-quality backlinks or a link acquisition strategy that doesn’t comply with Google guidelines (purchase, exchange, suspicious frequency in new links etc.).

Last but not least, the Fred algorithm hunts out websites which make an abusive use of advertising and only seek to generate income, to the detriment of content quality for users. This can be due to too many low-quality backlinks, too much spam and advertisements in relation to content, over-optimized anchors or badly defined siloing which makes browsing difficult. A website hit by a Fred penalty might see its traffic drop by 50%: if this is your case, a good clean-up will be necessary!

No matter which algorithm you’ve upset, you can identify the nature of the penalty using the Search Console and start getting your website back on track. Then, you can request a review of your website to get it back up on the SERP as soon as possible. In any case, it’s important to act QUICKLY!

Your competitors’ websites have overtaken you

It’s the same battle for everyone on Google (and in real life too!): to get ahead of your competitors. If you’re thinking about this, tell yourself that it’s the same for your competitors!

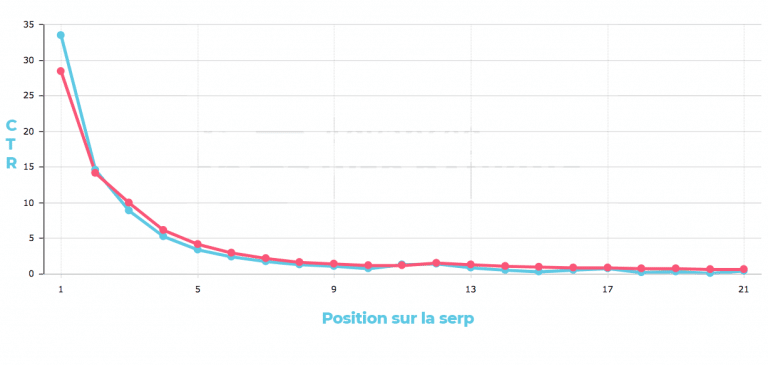

Websites ranking in the top 3 of the SERP get 80% of all clicks on a search results page. If your website was ranking in the top 3 and suddenly drops to 4th or 5th position, you will quickly see a drop in the organic traffic you were used to getting. Even worse, if you fall to the second page of search results, your traffic and visibility will be significantly impacted!

You are the victim of a Negative SEO campaign

If you’re hit by Negative SEO, it might be the result of someone trying to hurt you on purpose. The aim of such an attack is to outrank you or even get your website deindexed. But who could benefit from your website’s deindexing? Look no further than your competitors. Why? Because using Negative SEO techniques against a website takes time, energy and sometimes even money, but sometimes outranking industry leaders is well worth such effort!

Negative SEO uses Black Hat SEO techniques. These techniques normally aim to artificially increase a website’s ranking by abusing practices that go against Google’s guidelines. Here, they are deliberately pointed at the targeted website so that Google sanctions it heavily! A sudden increase in poor-quality backlinks, a penalty warning in the Search Console or a sudden drop in your traffic are signals that should alert you.

A Negative SEO attack is difficult to foresee, but here are some tips that can help you quickly identify it:

- regularly audit your website;

- keep an eye on your netlinking at all times;

- regularly check for duplicate content which could be copied from your website.

As a result of Negative SEO actions, the reputation you took so long to build can collapse in no time: extra care is needed!

Your website has been hacked

Unlike Negative SEO, which aims to harm you personally, SEO hacking is a money-driven hack that will damage your website’s SEO score. For example, a hacker identifies security holes in your website and uses them to create hundreds or even thousands of webpages in order to make money through affiliate marketing or ads, without you even noticing it.

To avoid ending up in this situation, remember to make regular updates of your website, but also of the plugins you use. This will allow you to quickly patch the different flaws. If you use a template on a CMS, don’t forget to check its vulnerability!

All websites that are hacked, no matter the kind, will see their SEO score drop sharply on Google. Once again, stay alert using Analytics, Search Console and ranking tracking tools. They will allow you to react quickly, if not to avoid the disaster.

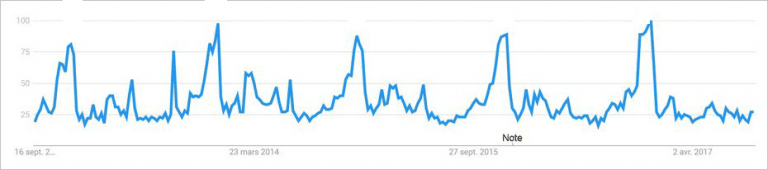

Your sales are seasonal

Before panicking because visitors seem to be abandoning your website, try to see if your sales are seasonal or not. A website to hire ski gear will see much more traffic during the winter months than in the middle of August. For a website selling ice cream, the opposite will be true. Some event websites can also see large variations in traffic: don’t panic, it’s normal!

The best thing to do is to compare your KPIs with last year’s over the same period. Only then can you know whether your website is truly seeing an unusual drop in traffic or if it’s only seasonal.
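A year-over-year comparison like this takes only a few lines of Python. In the sketch below, the monthly figures, the function name `year_over_year_change` and the 20% alert threshold are all made up for the example.

```python
def year_over_year_change(this_year, last_year):
    """Return the relative change per period compared with the same period last year."""
    return {
        period: (this_year[period] - last_year[period]) / last_year[period]
        for period in this_year
        if last_year.get(period)  # skip periods with no (or zero) data last year
    }


# Hypothetical organic sessions per month.
last_year = {"Jul": 1000, "Aug": 800}
this_year = {"Jul": 950, "Aug": 400}

changes = year_over_year_change(this_year, last_year)
unusual = [period for period, change in changes.items() if change < -0.2]
print(unusual)  # ['Aug']
```

Here July is only slightly down compared with last July, which is within normal variation, while August has lost half of its traffic compared with last August and deserves a closer look.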

Google Trends can also give you precious information on changes in query search volumes. This tool allows you to check for trends and therefore see whether your situation is in line with these or not!

How to react to a loss of rankings on Google

The loss of organic traffic is an uncomfortable situation that can hit any website during its lifetime. You are never safe from it! If you are affected, it is essential to know what to do to limit the damage, but also to surround yourself with SEO experts who are used to dealing with this kind of situation.

First, the objective is to identify precisely the origin of your traffic drop: is it a technical issue or a deliberate attack? This information is essential to know where and how to proceed. By making a daily report of your traffic and your rankings, you will be able to detect the disaster before it happens!

Setting up alerts is also a great way of staying proactive: you will be able to quickly detect negative SEO actions. While you’re at it, keep an eye on the dates of algorithm updates: if they match the date of the drop in traffic, then you know what you have to do…
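Matching dates can be automated as well. The Python sketch below checks whether any known update falls within a few days of the traffic drop. The dates are placeholders and not real Google update dates, and the 7-day window is an arbitrary assumption.

```python
from datetime import date, timedelta


def updates_near(drop_date, update_dates, window_days=7):
    """Return the update dates that fall within `window_days` of `drop_date`."""
    window = timedelta(days=window_days)
    return [d for d in update_dates if abs(drop_date - d) <= window]


# Placeholder dates for illustration only (not real update dates).
drop_date = date(2001, 3, 10)
update_dates = [date(2001, 1, 15), date(2001, 3, 5)]

print(updates_near(drop_date, update_dates))  # [datetime.date(2001, 3, 5)]
```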

As soon as you notice a change that could lead to a loss of rankings, don’t wait to react. A proactive attitude is the best way to prevent a drop in traffic, so stay alert and be ready to act at any time!